I have been through hundreds of penetration tests over the last few years, both on the testing side and on the “defending” side. In many cases I’m doing routine internal security testing on applications that then go through a 3rd-party pentest, so I can compare their results with mine. It’s probably not surprising that BurpSuite is almost everyone’s tool of choice: it’s reliable, feature-rich and well priced, and £199 per year is really cheap in this business. In theory, such an attractive price should leave plenty of room for the security consultants who run it to apply their own expertise. BurpSuite is only a tool, after all.

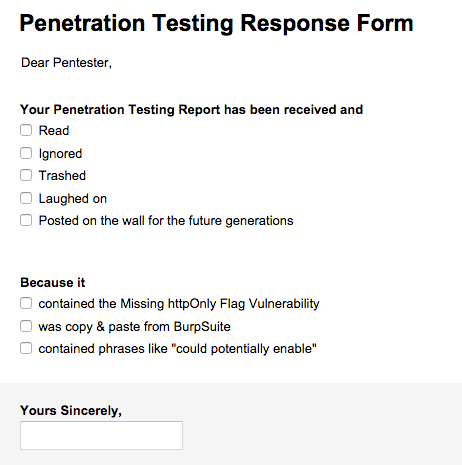

And still, the majority of the 3rd-party reports I have to respond to are almost completely unfiltered BurpSuite output, with little or none of their authors’ own expertise applied. The authors don’t seem to have made even the slightest attempt to understand the context in which the application operates, which would usually allow them to downgrade most of the alleged “vulnerabilities” to “informationals”. And, most worryingly, these are companies with respected brands and all kinds of national certifications.

The favourite seems to be “missing httpOnly on cookie”. It is trivial for BurpSuite to spot, and the scanner usually won’t understand the full context: for example, that the cookie lacks the flag intentionally, because the JavaScript frontend is meant to read it.

Or, what about reporting “missing httpOnly” or “missing Secure flag” on such a cookie?

Set-Cookie: myCookie=; path=/

Are you sure there will be anything left in this cookie to leak as a result of these missing flags? No: its value is empty. And the whole “missing cookie flags” business is precisely about preventing theoretical leakage of the cookie contents, either through compromised JavaScript (httpOnly) or through requests sent outside SSL/TLS (Secure). BurpSuite may not know that, but the person who runs it has to.
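The reasoning above can be turned into a small triage step before a “missing flag” finding goes into a report. This is a minimal sketch under stated assumptions: `parse_set_cookie` and `missing_flag_findings` are hypothetical helpers, and the parser is deliberately simplified rather than a full RFC 6265 implementation. It only encodes the empty-value case from the example; whether a non-empty cookie must be readable by JavaScript still has to be checked against the real application.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class SetCookie:
    name: str
    value: str
    attributes: dict[str, str]  # lower-cased attribute name -> value ("" for bare flags)


def parse_set_cookie(header_value: str) -> SetCookie:
    """Simplified Set-Cookie parser: good enough for triage, not RFC 6265 complete."""
    parts = [p.strip() for p in header_value.split(";")]
    name, _, value = parts[0].partition("=")
    attributes: dict[str, str] = {}
    for part in parts[1:]:
        if not part:
            continue
        key, _, val = part.partition("=")
        attributes[key.strip().lower()] = val.strip()
    return SetCookie(name.strip(), value.strip(), attributes)


def missing_flag_findings(header_value: str) -> list[str]:
    """Return only the missing-flag findings that can matter for this header."""
    cookie = parse_set_cookie(header_value)
    if cookie.value == "":
        # An empty value has nothing to leak, whatever the flags say.
        return []
    findings = []
    if "httponly" not in cookie.attributes:
        findings.append(f"{cookie.name}: missing HttpOnly")
    if "secure" not in cookie.attributes:
        findings.append(f"{cookie.name}: missing Secure")
    return findings


print(missing_flag_findings("myCookie=; path=/"))       # []
print(missing_flag_findings("session=abc123; path=/"))  # ['session: missing HttpOnly', 'session: missing Secure']
```

A non-empty result is only a starting point: a cookie that the frontend legitimately reads will still show “missing HttpOnly” here, and that is exactly the context the tester has to add.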

Another popular technique for inflating reports is to turn all the “informationals” into “low risk issues”, for example by stating that “application data is cached on client browsers”. How do you know that? Well, because there’s no Cache-Control header. Are you sure you really understand how browsers cache pages loaded over TLS, and does a missing header substantiate that definite “is cached” statement?
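To illustrate the difference between what the headers say and what a report claims, here is a minimal sketch of a hypothetical `caching_statement` helper. It assumes you have the response headers as a plain dictionary, and it deliberately refuses to conclude “is cached” from a missing header: without explicit freshness information, HTTP caching (RFC 9111) only permits heuristic caching, which is a possibility to verify, not an observation.

```python
from __future__ import annotations


def caching_statement(headers: dict[str, str]) -> str:
    """Describe what the response headers actually say about browser caching.

    Header names are matched case-insensitively. This is a triage aid,
    not a model of any particular browser's cache.
    """
    lowered = {k.lower(): v for k, v in headers.items()}
    # Collect directive names only, e.g. "max-age=0" -> "max-age".
    directives = {
        d.strip().split("=", 1)[0].lower()
        for d in lowered.get("cache-control", "").split(",")
        if d.strip()
    }
    if "no-store" in directives:
        return "not stored: Cache-Control: no-store"
    if directives or "expires" in lowered:
        return "explicit caching policy present; review its directives"
    # No explicit freshness information: a cache MAY apply heuristic freshness,
    # but that is something to verify, not something already observed.
    return "unspecified: verify actual browser behaviour before claiming data 'is cached'"


print(caching_statement({"Content-Type": "text/html"}))
```

The exact wording of the returned strings is an assumption for illustration; the point is that an absent header maps to “unspecified”, never to “is cached”.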

There are some debatable ones as well: is unfiltered input reflected in an application/json response really an exploitable XSS? Well, it depends, and given attacks such as reflected file download, I would probably want to know about it. But listing it as a “reflected Cross-Site Scripting vulnerability” without the slightest risk analysis is simply unprofessional.
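One way to make that “it depends” explicit is to let the response type drive the label. The sketch below is a hypothetical triage rule, not a standard: `triage_reflection` and `HTML_RENDERED_TYPES` are illustrative names, and the rule assumes you know the response’s Content-Type and whether it carries `X-Content-Type-Options: nosniff`. It shows why the same reflection can be a candidate XSS in one response and something to assess as reflected file download in another.

```python
# Media types that browsers render as documents able to run script when
# navigated to directly (illustrative, not exhaustive).
HTML_RENDERED_TYPES = {"text/html", "application/xhtml+xml", "image/svg+xml"}


def triage_reflection(content_type: str, nosniff: bool) -> str:
    """Suggest how to classify unfiltered input reflected in a response.

    Hypothetical rule for illustration: the label depends on how the
    browser will treat the response, not on the reflection alone.
    """
    mime = content_type.split(";", 1)[0].strip().lower()
    if mime in HTML_RENDERED_TYPES:
        return "reflected XSS candidate: confirm with a proof of concept"
    if not nosniff:
        return "check MIME sniffing in supported browsers before calling it XSS"
    return "not directly XSS: assess reflected file download and report with a risk analysis"


print(triage_reflection("application/json; charset=utf-8", nosniff=True))
```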

Why is it “worse than useless”?

Penetration testing companies are hired as independent experts to confirm the correctness of the programming work done by an internal or external software supplier. The sponsors of these projects usually lack the expertise to assess not only the quality of the programming (that’s why they hire pentesters in the first place) but also the quality of the pentesting (quis custodiet ipsos custodes: who will guard the guards themselves?).

The penetration testing company thus plays a very responsible role in the project. It applies expertise that is rare and sought after on the market, it is paid quite well for that, and its advice can affect the contractual relationship between the sponsor and the supplier. Penetration testing is becoming a profession of public trust, and its practitioners really should not be mindlessly copying whatever an automated scanner outputs.

Inflating pentest reports to prove that “you’ve done your job” is really bad practice: it wastes time on all sides and can expose the whole project to a risk much bigger than a “missing flag in cookie”. If the software development team knows what they’re doing, they can prove to their stakeholders that the alleged “issues” are non-issues by presenting a proper risk analysis and so on, but that is really your job, not theirs. In the worst case, such inflated reports redirect resources into polishing something that is not vulnerable in the first place, just to comply with some imaginary “best practices” (best for what environment? what business context?).

Remember, listing a non-exploitable theoretical attack vector as a “medium issue” will not “increase awareness” in the development team. It will only waste everyone’s time on pointless discussions and proofs that white is indeed white (this is exactly why Google has published a list of non-qualifying bug reports). Instead of a highly theoretical risk of “potentially leaking a session cookie”, you will have a real, materialised risk of a project that is never delivered.

What should an ideal pentesting report look like?

First and most importantly, put “could be a risk”, “might allow”, “could potentially enable” and all that weasel talk on your blacklist. Penetration testing companies are paid to reduce uncertainty, not to increase it by speculating.
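A blacklist like this is easy to enforce mechanically on a report draft before it goes out. The following is a minimal sketch: `find_weasel_talk` is a hypothetical helper, and the phrase list simply reuses the examples from this article, so treat it as a starting point to extend rather than a complete vocabulary.

```python
from __future__ import annotations

import re

# Phrases quoted in this article; extend to suit your own report style.
WEASEL_PHRASES = [
    "could be a risk",
    "might allow",
    "could potentially enable",
    "could potentially allow",
]

_PATTERN = re.compile("|".join(re.escape(p) for p in WEASEL_PHRASES), re.IGNORECASE)


def find_weasel_talk(report_text: str) -> list[tuple[int, str]]:
    """Return (line number, matched phrase) for every blacklisted phrase in a draft."""
    hits = []
    for lineno, line in enumerate(report_text.splitlines(), start=1):
        for match in _PATTERN.finditer(line):
            hits.append((lineno, match.group(0)))
    return hits


draft = "Summary\nThe missing flag might allow session theft.\n"
print(find_weasel_talk(draft))  # [(2, 'might allow')]
```

Each hit is a prompt to either replace the speculation with evidence or move the item to a list of suggestions.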

Second, strictly distinguish vulnerabilities from missing safeguards. These are not the same. A vulnerability is an open path to violating the application’s security model or business logic, which you can either demonstrate immediately or at least show to be highly likely (you don’t see the source code, but you saw the error response). A proof of concept is the final nail in the coffin, but sufficient circumstantial evidence is also perfectly fine.

A missing safeguard, on the other hand, is something the application should have in order to defend against possible attacks. All kinds of defense-in-depth measures and “best practices” fit here. These should be suggested, but remember that every application can be improved and hardened almost indefinitely (TLS→HSTS→HPKP→OCSP stapling→verified stapling… where do you stop?), and project managers must balance security features against user experience. Why not report them as “suggested improvements” instead of “low risk issues”? This still demonstrates the effort you’ve put into the testing, without introducing the nervousness associated with the word “issue”.
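The distinction between vulnerabilities and missing safeguards can be built into the structure of the report itself. This is a minimal sketch with hypothetical names (`Kind`, `Finding`, `group_report`): a finding counts as a vulnerability only if there is a proof of concept or circumstantial evidence that the path is open, and everything else lands under “suggested improvements”.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Kind(Enum):
    VULNERABILITY = "vulnerability"
    SUGGESTED_IMPROVEMENT = "suggested improvement"


@dataclass
class Finding:
    title: str
    proof_of_concept: bool = False  # you actually exploited it
    # Evidence that the path is open even without a PoC, e.g. a revealing
    # error response. A merely absent header is NOT evidence of an open path.
    circumstantial_evidence: list[str] = field(default_factory=list)

    def kind(self) -> Kind:
        if self.proof_of_concept or self.circumstantial_evidence:
            return Kind.VULNERABILITY
        return Kind.SUGGESTED_IMPROVEMENT


def group_report(findings: list[Finding]) -> dict[Kind, list[str]]:
    """Split findings into the two report sections, preserving order."""
    sections: dict[Kind, list[str]] = {kind: [] for kind in Kind}
    for finding in findings:
        sections[finding.kind()].append(finding.title)
    return sections


report = group_report([
    Finding("SQL injection in search", proof_of_concept=True),
    Finding("No HSTS header"),
])
print(report[Kind.SUGGESTED_IMPROVEMENT])  # ['No HSTS header']
```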

It’s also very important that the report contents are relevant to the tested application. You can usually see all of the application’s traffic, so you can easily verify whether a cookie is accessed by the JavaScript frontend or not. If you still copy and paste “could potentially allow leaking”, you’re only proving that you’ve got no idea what you’re doing. And if you don’t understand the application’s business logic or internals (which is entirely understandable, as you’ve probably only just seen it for the first time), just schedule a call with the development team.
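Checking whether the frontend reads a cookie can start with a quick pass over the scripts captured in the proxy history. This is a rough heuristic sketch, assuming you have exported the JavaScript responses as plain strings; `js_reads_cookie` is a hypothetical helper. Frameworks may read cookies through bundled library code, and a helper in one file may read a cookie named in another, so a `False` result is a reason to ask the developers, not proof.

```python
from __future__ import annotations

import re


def js_reads_cookie(js_sources: list[str], cookie_name: str) -> bool:
    """Heuristic: does any captured script touch document.cookie and name this cookie?"""
    # Match the cookie name as a whole token, e.g. "XSRF-TOKEN" but not "XSRF-TOKEN2".
    name_pattern = re.compile(r"\b" + re.escape(cookie_name) + r"\b")
    return any(
        "document.cookie" in src and name_pattern.search(src) is not None
        for src in js_sources
    )


scripts = ['const t = document.cookie.split("; ").find(c => c.startsWith("XSRF-TOKEN="));']
print(js_reads_cookie(scripts, "XSRF-TOKEN"))  # True
print(js_reads_cookie(scripts, "SESSIONID"))   # False
```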

Update

There was some discussion of this topic on Reddit, along with two absolutely amazing “pentesting horror” stories: “Our security auditor is an idiot.” and “The World’s Worst Penetration Test Report”.

People on Reddit also pointed out that “penetration testing” is different from “vulnerability assessment”. I couldn’t agree more. Unfortunately, the term “penetration testing”, meaning a goal-oriented attempt to see whether someone can break in, was taken over long ago by vulnerability scanner operators, and I’ve simply followed this usage because I hear it every day.